What's In Store?

Memories of Weird Memories, of Computers Past.

It is tempting to spend a lot of time in this article marveling over how small and cheap computer storage has gotten versus the old days. In the 1980’s when I worked for Digital Equipment Corporation, my first job was to design memories for a mainframe computer. The system we were building could theoretically have up to 1GB of memory, but we laughed at this idea at the time, because it would require multiple refrigerator-sized cabinets and millions of dollars to build it, and who needs a gigabyte of memory, anyway?

Now of course, a USB drive in the back of your desk drawer that is only 1GB gets thrown out for being too small, and a suitable replacement can be found for under $10 at the supermarket checkout stand. I think though that is all the time I’ll devote to talking about this remarkable progression. Although it still boggles my mind every time I think about the comparison, it’s a thoroughly covered topic.

Instead I wanted to rewind even further back, and look at storage systems that are even older than the (admittedly old) stuff I usually write about. Some of these I’ve actually used, but some I’ve just learned about over the years.

It Worked On Paper

Paper as a storage medium has been around a long time. Paper rolls to control musical instruments such as pianos date back to the mid 19th century. IBM got its start building machines that improved upon even older paper-card tabulators from the 1800’s, expanding the size and thus storage of the existing 22-column cards to 80. They also changed the holes along the way from round to rectangular, and the IBM punch card was born. (Fun fact: this is also the reason old CRT terminals have 80 columns of text characters.)

This format ruled the early information age from the 1920’s all the way up into the 1970’s. (IBM says in the link above that these cards stored all of the world’s information in the first half of the 20th century, but I think they are forgetting about books.)

I had a brief stint of writing programs on these cards in a COBOL class in the 70’s at our high school. Using cards as a programming storage medium was already becoming outmoded by then, but still alive and well in the not-so-cutting-edge public school system I was in.

The two things I remember about it are, first, the unforgiving nature of making a mistake. There was no delete key to un-punch a hole, so you just started a new card. The second is what happens if you drop your card deck. Every line of your program scatters to the floor in random order, and re-sequencing them was a pain. Each program statement would have a line number on the card so it could be figured out, but reading hundreds of cards and shuffling them around was tedious.
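The re-sequencing chore amounts to a sort keyed on each card's line number. Here is a minimal sketch, assuming an 80-column card image with a six-digit sequence number in the first six columns (the COBOL convention). The helper names `make_card` and `resequence` and the sample statements are made up for illustration.

```python
import random

def make_card(seq, text):
    """Build an 80-column card image: 6-digit sequence number, then the statement."""
    return f"{seq:06d}{text}".ljust(80)[:80]

def resequence(deck):
    """Put a shuffled deck back in order using the number in columns 1-6."""
    return sorted(deck, key=lambda card: int(card[:6]))

# A tiny five-card "program", numbered 10, 20, 30, ... (hypothetical contents)
program = [make_card(n * 10, f" STATEMENT-{n}.") for n in range(1, 6)]

dropped = program[:]
random.Random(1).shuffle(dropped)   # simulate dropping the deck on the floor
restored = resequence(dropped)
```

A mechanical card sorter reached the same result one column at a time, which is part of why doing it by hand was so tedious.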

If you were lucky, you could use something like an IBM card sorter to do it, but it was unlikely that this critical piece of data processing center equipment would be available to you for reassembling a deck. The pros therefore took a big magic marker and drew a diagonal stripe down the side of the deck, so they could visually spot cards that were out of order.

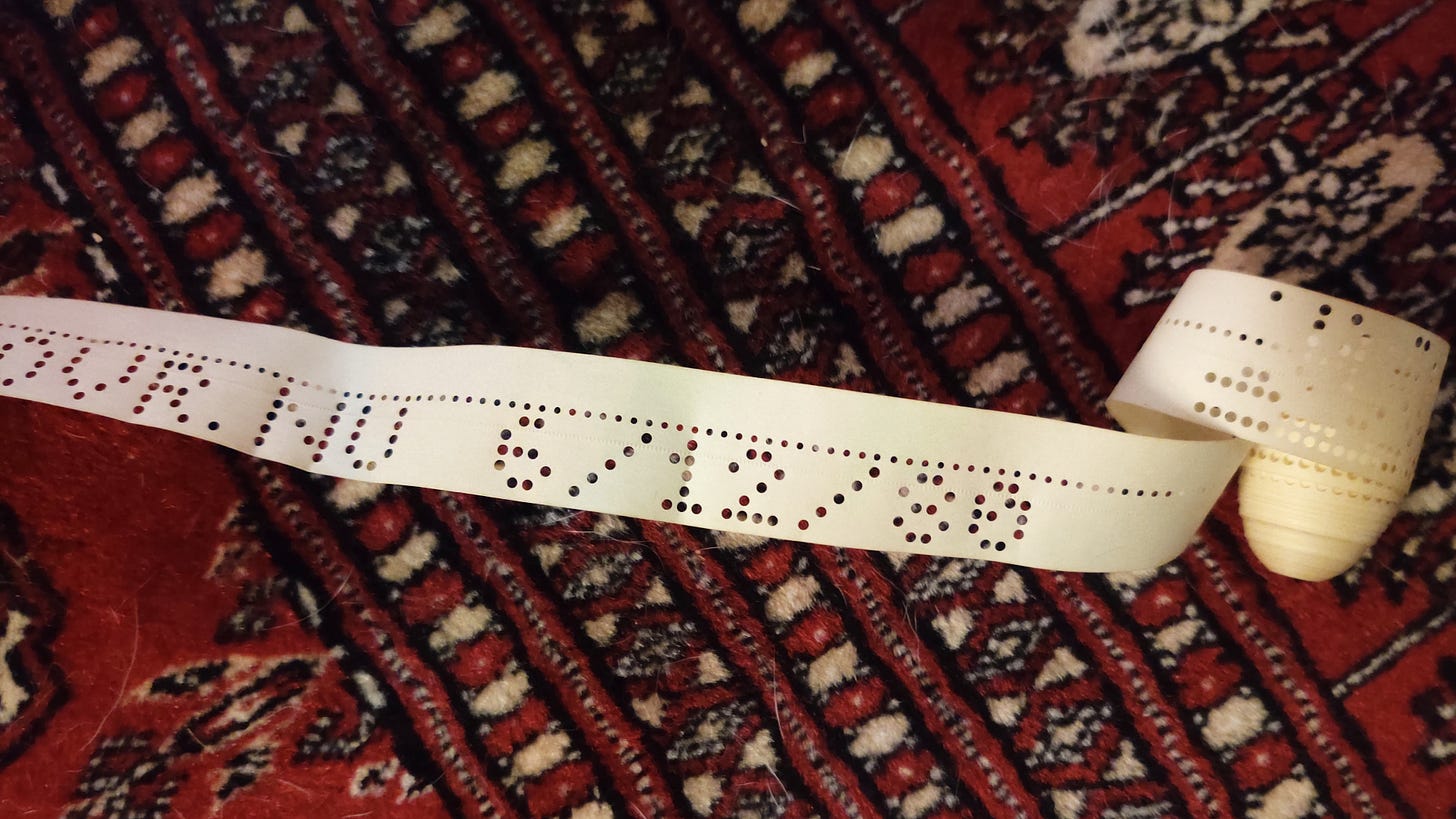

I spent more time in the 70’s with another paper format, paper tape. A paper tape punch was a common peripheral that was attached to teletype machines, such as the Model 33 I used in school to write BASIC programs. The punch and reader both showed up as devices in the equivalent of /dev, and much like UNIX you could just redirect a program onto or off of paper tape.

Punching and reading were dead slow, due to the mechanical speed limitations and also the fairly basic encoding used (7-bit ASCII). But it was a true read/write format, even if you could not erase and reuse the medium. It was also a lot easier to manage than cards, since no typing was involved.

The last paper storage device I’ll mention is the printer. You might not think of a printer as a storage device, but it was very common in the 70’s and even 80’s to share programs by printing them. The printer was the ‘writer’, and the ‘reader’ would be the guy having to type it back in, which was of course very slow. But many magazines and books of the time would publish programs in print to share with their readers, and I spent more than a few hours typing in BASIC programs from print, then trying to find all my typos.

I recently found a printout of a program I wrote when I was 17, stuck in an old Computer Gaming World magazine from the 80’s. It was a game I wrote, and I decided to resurrect it and see if I could get it running. (Spoiler: I did, but I will be covering that one in another post.) The thing I find amazing about paper as a storage format is its longevity. Card decks and paper tape punched decades ago are still quite viable, provided you can find a way to read them, and provided they did not get wet at any time.

Who’s Going To Hold My Bits?

Paper is all well and good as a storage medium for the mechanical computer age, but when electronic computers came around in the 1940’s, a faster solution was desirable, at least for main memory, or “store” as it was more commonly referred to back then. If you think of punch cards as being the large-capacity “disk storage” of the day, it still left a need for a smaller, faster storage device for programs and data that could keep up with the processor (like the dynamic RAM systems of today).

Mechanical solutions were out — and electronic ones in. But there was a problem of scale, since integrated circuits were still twenty years away, and even the transistor had not been invented yet. Register circuits made out of tubes could be constructed, but it would take at minimum 4-5 tubes and associated discrete components to store a single bit. This was fast but required a lot of space, and a lot of power. Tens of thousands of tubes just to store a kilobyte of data, creating all sorts of cost, power, thermal, and reliability problems.
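The "tens of thousands" figure is easy to check with a bit of arithmetic. This is a back-of-the-envelope sketch, assuming a modern 1024-byte kilobyte of 8-bit bytes (an anachronism for 1940’s machines) and the 4-5 tubes per bit quoted above. The function name `tubes_needed` is just for illustration.

```python
BITS_PER_BYTE = 8          # assumption: modern 8-bit bytes
BYTES_PER_KILOBYTE = 1024  # assumption: binary kilobyte

def tubes_needed(num_bytes, tubes_per_bit):
    """Tubes required to hold num_bytes in tube-based register circuits."""
    return num_bytes * BITS_PER_BYTE * tubes_per_bit

low_estimate = tubes_needed(BYTES_PER_KILOBYTE, 4)   # 4 tubes per bit
high_estimate = tubes_needed(BYTES_PER_KILOBYTE, 5)  # 5 tubes per bit

print(f"{low_estimate:,} to {high_estimate:,} tubes per kilobyte")
```

That works out to somewhere between roughly 33,000 and 41,000 tubes, before counting any of the supporting discrete components.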

The motivation to build a smaller storage device was high, so they got creative about where they could keep all their bits around for a while. One of the earliest solutions was to use a wire. Slightly more complex, but basically, a wire. The idea was based on the principle of wave propagation, where an electrical pulse would take time to travel down a conductor, and if the conductor was long enough, several pulses representing bits could be stored in the wire as they travelled.

This technical area is also referred to as transmission line theory, and it later haunted systems engineers like me, because the effects of signals propagating in wires can lead to delays and even reflections that cause timing and signal integrity problems for fast circuits. The computers of the 1940s though were slow enough that many of these issues were not a problem then.

This is not to say that delay line memory, as it was called, was an easy thing to engineer. There were many problems, the first being that a wire, even a long wire, does not store information for very long. They got around this by building even slower propagation circuits, using chains of capacitors to form an electric delay line. It could not store a lot of bits, but it was relatively cheap and faster than mechanical storage.

Then things get really strange. The next invention in this area was something called a mercury delay line, which was basically a big tank of liquid mercury with a piezoelectric crystal on one end, and a microphone on the other. The crystal would produce a tight-beam sound wave that would propagate through the mercury, which carries sound much faster than air does (about 1450 m/s vs. 340 m/s for air), but still far slower than an electrical signal travels in a wire.

Pulses of sound would be transmitted into the tank as a serial stream, read out at the other end, amplified, modified if needed, and fed back in. The length of the tube had to be carefully selected so the cycle time of the system was in line with the CPU clock rate, and multiple tubes were needed to store anything more than a hundred or so bits.
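The recirculating behavior described above can be modeled as a fixed-length queue: each clock tick, one bit emerges from the far end and is fed back into the near end, optionally replaced by a new value. This is a toy sketch, not a model of any real machine. The `DelayLine` class, its method names, and the 8-bit length are all illustrative assumptions.

```python
from collections import deque

class DelayLine:
    """Toy recirculating delay line: bits are only reachable as they emerge."""

    def __init__(self, length):
        self.line = deque([0] * length)
        self.position = 0  # address of the bit currently arriving at the output

    def tick(self, new_bit=None):
        """Advance one clock: take the emerging bit, re-inject it (or a new one)."""
        bit = self.line.popleft()
        self.line.append(bit if new_bit is None else new_bit)
        self.position = (self.position + 1) % len(self.line)
        return bit

    def _wait_for(self, address):
        """Let bits recirculate until the wanted address reaches the output."""
        ticks = 0
        while self.position != address:
            self.tick()
            ticks += 1
        return ticks

    def write(self, address, bit):
        waited = self._wait_for(address)
        self.tick(new_bit=bit)
        return waited

    def read(self, address):
        waited = self._wait_for(address)
        return self.tick(), waited

line = DelayLine(8)
line.write(3, 1)
bit, waited = line.read(3)   # must wait for the bit to come all the way around
```

The `waited` count is the point of the exercise: access is serial, so the time to reach a bit depends on where it happens to be in the loop when you ask for it.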

Remember What You Saw on TV?

By the early 1950’s computers were getting complex enough that they needed more storage, and faster storage. Delay lines were rather limited in capacity, and the latency of electrical or sound waves propagating through some medium created a fundamental access time limit that was getting in the way of CPU throughput. Add to that the issues with the serial aspect of memory access, and don’t even start to think about the toxicity issues of having all that mercury around.

Long way of saying, computer engineers of the day were not satisfied yet (they never are, trust me) and went on to find other weird and wacky ways to store data, even in the absence of semiconductors.

The next thing they came up with is one of my personal favorites, something called the Williams-Kilburn Tube, invented in 1948 by (surprise) Fred Williams and Tom Kilburn. This device was basically a CRT very similar to one you would find in an old television, and bits of data could be projected as dots on an x/y grid on the face of the tube. Although some tubes were coated inside with phosphor, it was not the light from the dots that held the state of the bits, but rather the secondary electrical charge produced on the screen when the electron gun fired.

The charge for any particular dot that was written would take a while to decay, and in the intervening time, a detector plate in front of the CRT would read the values of the dots, and write any “ones” that were fading back to full charge strength before the charge was depleted. If that sounds really hacky / Rube Goldberg-like, keep in mind that this principle is very similar to modern-day dynamic RAM, which stores charge temporarily and also requires a refresh cycle to occur periodically to rewrite values.
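The refresh idea, shared by the tube and by dynamic RAM, can be shown with a toy model of a single leaky storage cell. Everything numeric here is an illustrative assumption rather than a real device parameter: the charge halves on every time step, and anything above 0.25 reads as a one. The `ChargeCell` class is hypothetical.

```python
class ChargeCell:
    """Toy leaky storage cell: a stored one fades unless it is rewritten."""

    def __init__(self):
        self.charge = 0.0

    def write(self, bit):
        self.charge = 1.0 if bit else 0.0

    def decay(self, factor=0.5):
        """One time step of leakage (assumed: charge halves each step)."""
        self.charge *= factor

    def read(self, threshold=0.25):
        return 1 if self.charge > threshold else 0

    def refresh(self):
        """Read the cell and write the same value back at full strength."""
        self.write(self.read())

neglected = ChargeCell()
neglected.write(1)
for _ in range(3):
    neglected.decay()            # no refresh: the one fades away

refreshed = ChargeCell()
refreshed.write(1)
for _ in range(3):
    refreshed.decay()
    refreshed.refresh()          # rewritten before it drops below threshold

print(neglected.read(), refreshed.read())
```

The refresh only works if it arrives before the charge falls below the read threshold, which is why the refresh interval is a hard timing requirement in both technologies.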

Anyway this memory had all sorts of issues with calibration, wear-out, and other things, but it was arguably the first electronic random-access memory invented, which had huge advantages over older approaches like delay lines. Williams-Kilburn tubes were successfully used on several commercial computers made by IBM and others in the late 1940’s and early 1950’s.

Core Capabilities

The real game-changer of the pre-semiconductor storage scene though would come in 1951 or so, when magnetic core memory was developed by An Wang and others. Core was a technology consisting of a matrix of donut-shaped ferrite beads that could hold state based on the polarity of a magnetic field that was induced into each one, via row and column wires strung through the beads.

Like the Williams Tube, core memory was randomly-accessible, but faster and generally more reliable, and with a greater information density. The amount of power needed to operate a core memory was generally flat regardless of the size, unlike its predecessors, and larger, multi-kilobyte systems were feasible with the technology. But there were a couple of downsides.

The first issue was that ferrite beads were expensive to make, and had to be hand strung, one bit at a time. Armies of factory workers, usually women because of their smaller hands and fine motor skills, worked to assemble these systems from the 1950’s, all the way into the mid 1970s when semiconductor RAM systems took over. Computers in general were hand made back then, and also very expensive though - so a little extra expense for core was not too big a deal, given the increased capability afforded by it.

The second issue with core was that the process used to read the ferrite beads also reversed the magnetic field, effectively erasing the state of the bit. This is where An Wang comes in though, because he contributed a circuit solution for this problem that introduced a read-then-write cycle for core access, kind of a discrete refresh that only takes place when the memory is read.
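The read-then-write cycle can be sketched in a few lines. This toy model assumes that sensing a core always leaves it in the zero state, so a read has to be followed by a write-back of whatever was sensed. The `CoreMemory` class and its method names are hypothetical, and the real circuitry is of course analog rather than a Python list.

```python
class CoreMemory:
    """Toy core plane: reading a core erases it, so reads must write back."""

    def __init__(self, size):
        self.cores = [0] * size

    def _destructive_read(self, address):
        """Sense the core; as a side effect it is left holding 0."""
        bit = self.cores[address]
        self.cores[address] = 0
        return bit

    def write(self, address, bit):
        self.cores[address] = bit

    def read(self, address):
        """Read-then-write cycle: sense the bit, then restore it."""
        bit = self._destructive_read(address)
        self.write(address, bit)
        return bit

mem = CoreMemory(16)
mem.write(5, 1)
first = mem.read(5)
second = mem.read(5)   # still 1, because the first read restored it
```

Without the write-back in `read`, the second access would return 0, which is exactly the problem the read-then-write cycle solves.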

Despite any costs or quirks, core was a commercial hit, and many computers, including the circa 1974 PDP-8 I used to punch that paper tape in the picture above, ran on core (a 32K array, if I remember right).

Another interesting property of core is that it is a non-volatile system, unlike most of its predecessors. The magnetic field in each ferrite bead is quite durable, and unless the core system is exposed to a strong magnetic source, the state of the memory should last almost indefinitely, even with no power.

That means that all those old computers in storage somewhere, in museums, in landfills or wherever they may be, likely still contain the last program they ever ran. And if you could find a way to power one on, that code could actually run again.

Spooky.

After core came MOS and CMOS memory in the form of static and dynamic RAM, EEPROMs, and a few oddball things like magnetic bubble memory that never caught on. But for the most part, memory systems of the 1970’s are very similar to the ones we have today. Today's are smaller and faster, with a host of innovative enhancements, but they use essentially the same base technologies.

As mentioned I spent my early hardware engineering days designing memory systems, and I’m just a little sad I didn’t get to work on any of these weird and wonderful beasts that came before my time. But I’m a little hopeful that a new, weird age of storage may soon be upon us.

Things like quantum computing will likely bring strange ideas back to the design of storage systems. I know close to nothing about quantum computing or physics, except that it tends to break traditional rules, like the one that says a thing cannot exist in two states at the same time. Sounds like great potential for awesome new storage systems we haven’t even thought of yet.

Taking advantage of new technology like this may require coming up with a whole new crop of weird ideas. But sometimes, weird changes the world.

Explore Further

Computer Gaming World Museum (full issues with printed programs you can have fun typing in!)

Next Time: I perhaps risk venturing into advice column territory, as I share some things learned over 30-something years working in the tech industry. My pearls of wisdom coming up next in: An Old Hacker’s Tips on Staying Employed
